Traditionally, IT environments were high-trust: Organizations had a low number of certificates and keys, all of which were contained, making them easy to manage in a static, reactive way.

Today, things look pretty different. Most organizations now operate in a multi-cloud world with dynamic workflows that create a large number of keys and certificates to manage. This situation has led to the rise of zero trust environments that require proactive, agile certificate management.

Microsoft Azure and Amazon Web Services (AWS) are two of the primary cloud services in use today, and they have shaped what modern IT organizations look like. And while they offer numerous benefits, they also make it more challenging to maintain visibility and control over a large number of highly dynamic certificates. What options does this situation leave organizations?

Ryan Yackel, VP of Product Marketing, and Brian Taricska, Senior Solutions Engineer, recently discussed the challenges created by this dynamic, multi-cloud environment and how integrating Keyfactor Command with Azure Key Vault and AWS Certificate Manager can help create value.

Certificate Management Challenges in Highly Dynamic, Zero Trust Environments

Today’s highly dynamic, zero trust environment has created several challenges when it comes to certificate management. Organizations find they lack visibility and control over their certificates and key stores at the highest level, which makes essential activities like reporting and expiration alerts difficult. Additionally, many organizations struggle to introduce consistent processes across multi-cloud vendors, leaving them to rely on manual, error-prone processes.

How did we get here? In general, four critical elements of today’s environment have created these challenges:

- More certificates: The volume of keys and certificates continues to expand every day

- Less control: Teams now issue certificates without any oversight by InfoSec

- Shorter life cycles: Short-lived certificates increase the risk of outages and double IT workloads

- No DevOps standard: No easy way to create consistent DevOps certificate processes across multiple cloud vendors

Despite these challenges, enormous value still exists in the cloud, most notably high scalability, reliability and uptime. Further, the reality is that the cloud is not going anywhere; organizations will continue using it more and more. In fact, Cisco forecast that cloud data centers would process 94% of workloads by 2021.

As a result, it’s important to get ahead of these challenges by introducing a central platform to manage these concerns and control all the keys and certificates issued with cloud providers like AWS and Azure. When done correctly, this control can provide the necessary level of visibility around keys and certificates, regardless of their issuing CA, and offer consolidated reporting of keys across multi-cloud instances, all from a centralized console. Finally, it can also help introduce consistent automation between multi-cloud vendors using a standard API set.

For example, a centralized solution for certificate lifecycle automation, like Keyfactor Command, can control and automate the issuance of keys and certificates to help:

- Improve compliance and control: Ensure certificates are compliant with organizational standards and always issued from a trusted CA

- Increase visibility: Discover certificates across various CAs, networks, applications, and devices

- Enhance reporting: Consolidate reporting of keys across multiple cloud instances or cloud providers by grouping certificates for monitoring and setting alerts for expirations

- Automate workflows: Automate certificate deployment to workloads and applications, and automate incident reporting, certificate renewals and installations

Integrating Keyfactor Command to Improve Certificate Automation

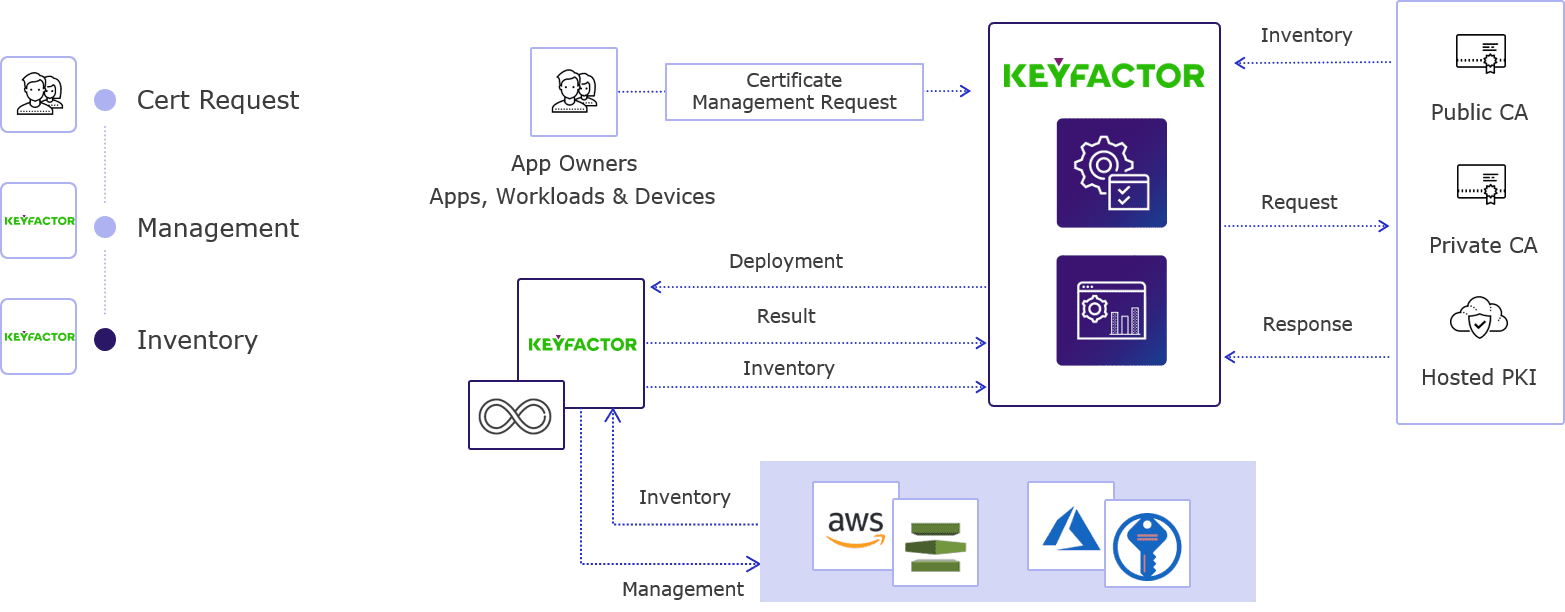

Before diving into the specifics of how Keyfactor Command works, it’s important to understand at a high level how Keyfactor integrates with cloud systems like AWS and Azure. It handles certificate requests and key store inventories for these systems, centralizing several different API integrations and workflows.

Keyfactor offers a single interface, either through the GUI or the APIs, for enrolling a certificate from a number of sources. In practice, this means that from a single request users can specify any target CA, whether that’s a public CA, a private CA or an ephemeral secret CA (like HashiCorp Vault) that ties into DevOps use cases.

You can use Keyfactor to deploy a certificate to a single location or multiple locations with a single API call or click of a button, and you can even add subject alternative names (SANs) to this request. As a result, you can efficiently distribute the same certificate across multiple endpoints using different DNS names or IP addresses and enforce various policies (e.g., RFC 2818) along the way. Keyfactor also offers several integrations with popular platforms, including service meshes and Kubernetes-type deployments, that you can use within AWS and Azure-based infrastructure.
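RFC 2818, mentioned above, describes how a client checks the host it is connecting to against the names listed in a certificate. The following is a minimal sketch of that check in Python, useful for confirming that one certificate really covers every endpoint you plan to deploy it to. The function names are hypothetical, the name lists are assumed to have been extracted from the certificate already, and only whole-label wildcards are handled (RFC 2818 also allows partial-label wildcards such as `f*.com`).

```python
import ipaddress


def _dns_name_matches(pattern: str, hostname: str) -> bool:
    """Match one DNS name entry against a hostname, label by label."""
    pattern_labels = pattern.lower().rstrip(".").split(".")
    host_labels = hostname.lower().rstrip(".").split(".")
    if len(pattern_labels) != len(host_labels):
        return False  # a wildcard covers exactly one label
    if pattern_labels[1:] != host_labels[1:]:
        return False
    # Simplification: only a whole-label wildcard ("*") is accepted.
    return pattern_labels[0] in ("*", host_labels[0])


def san_covers(endpoint: str, dns_names: list[str], ip_addresses: list[str]) -> bool:
    """Return True if the endpoint is covered by the certificate's name entries."""
    try:
        ip = ipaddress.ip_address(endpoint)
    except ValueError:
        # Not an IP literal, so compare against the DNS entries.
        return any(_dns_name_matches(name, endpoint) for name in dns_names)
    # IP endpoints must match an IP entry exactly.
    return any(ipaddress.ip_address(candidate) == ip for candidate in ip_addresses)
```

For example, with DNS entries `["app.example.com", "*.internal.example.com"]`, the call `san_covers("api.internal.example.com", ...)` passes while `san_covers("a.b.internal.example.com", ...)` does not, because the wildcard stands in for a single label only.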

Importantly, Keyfactor also considers the common DevOps dilemma of speed vs. security, in which developers need certificates quickly and InfoSec teams need to make sure those certificates are compliant. Specifically, Keyfactor enables developers to quickly access certificates, including procuring and provisioning them from compliant CAs, while providing the InfoSec team with the visibility they need into what gets issued, along with ongoing monitoring and alerting.

How it All Comes Together: A Look Inside Certificate Automation Workflows with Keyfactor

Let’s bring this all down to reality with a look inside how all of this comes together to power certificate automation workflows within Keyfactor.

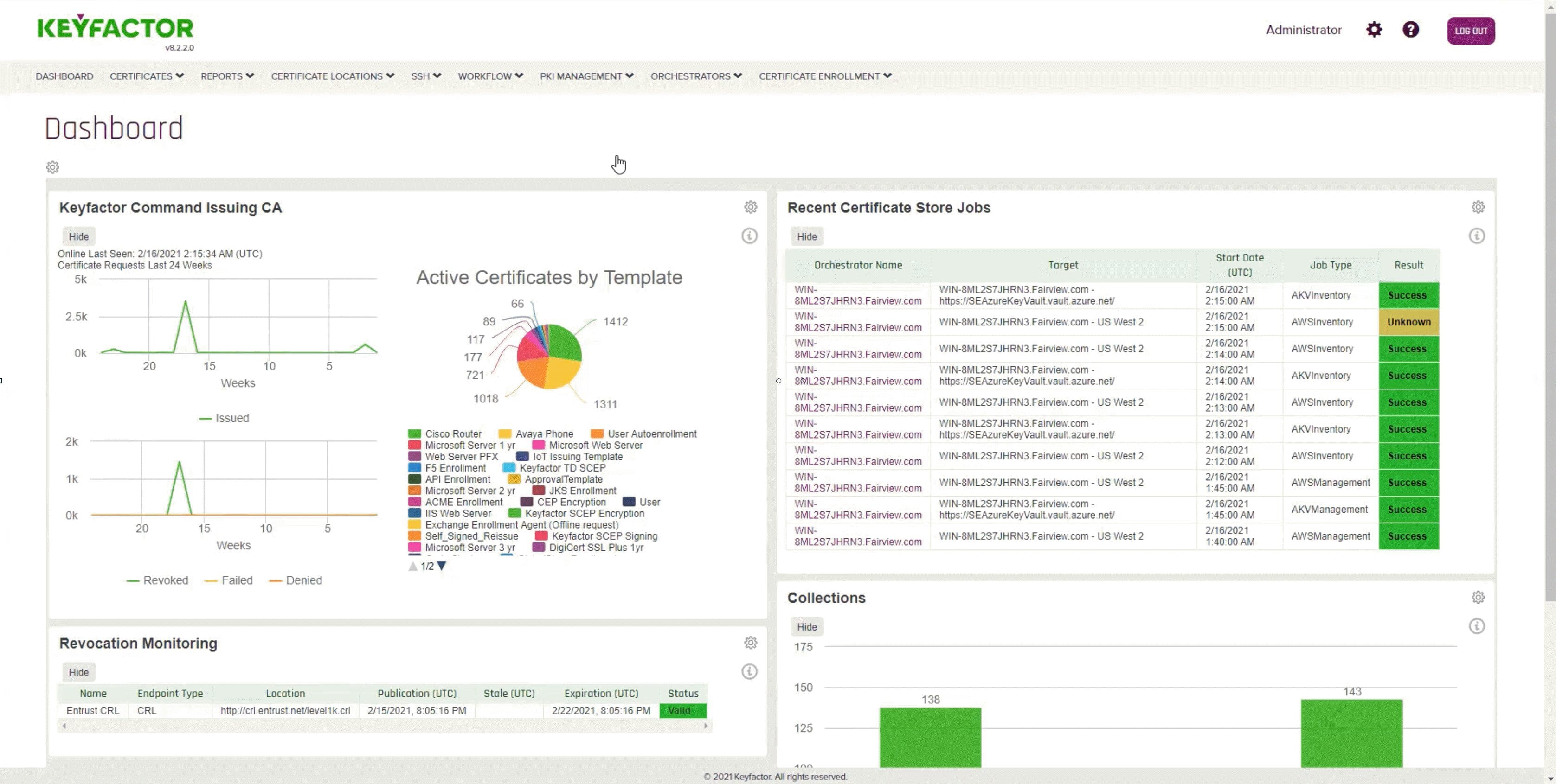

Step 1: Log in to Keyfactor Command

Logging in to Keyfactor takes you right to a dashboard that offers high-level monitoring of your PKI environment. Some of the most important things you see in this view include issuing CA details (which you’ll need for any certificate requests) and certificate store jobs that represent inventory on AWS and Azure Key Vault instances.

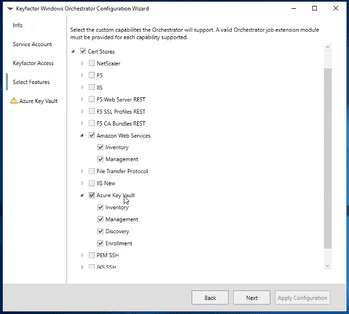

Step 2: Set Up Requirements

Once you’ve logged in, you need the Orchestrator to integrate with your AWS and Azure Key Vault instances. You can deploy the Orchestrator locally, remotely or even in a distributed model. Setting up everything simply requires sharing information about your installation and the certificate stores you’re enabling (in the case of this demo, that would be AWS and Azure Key Vault). After registering everything through those steps, you can go back into Keyfactor to approve the integration.

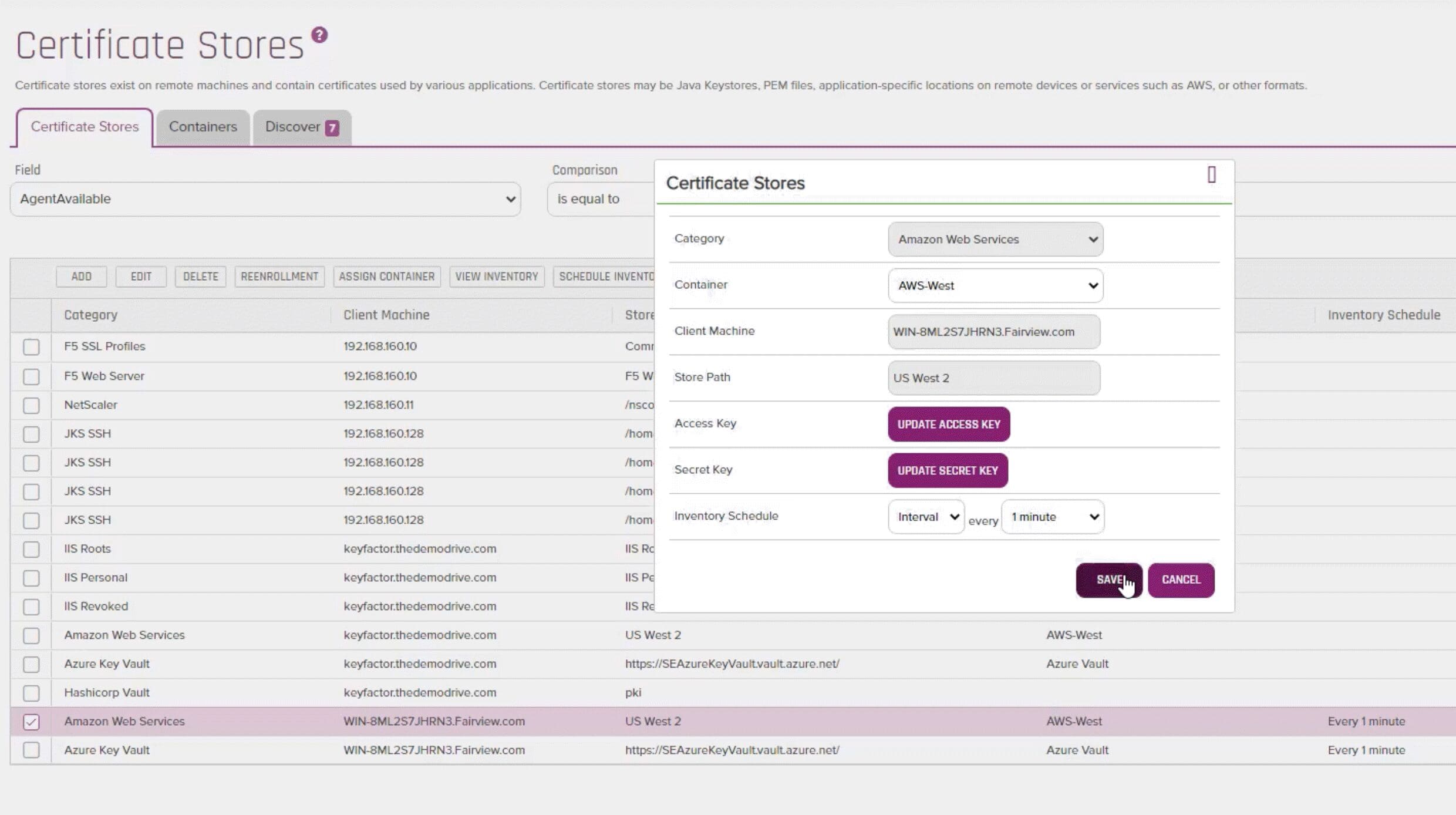

Step 3: Configure Your Certificate Store

Next, you can set up the certificate stores to tie into your AWS and Azure Key Vault instances. Keyfactor provides different ways to authenticate to each instance and its inventory, for example through remote forests and client machines.

Once you tie into the certificate stores, you can not only provision and deprovision certificates, but also view the inventory of everything that’s been issued — regardless of whether those certificates came from inside or outside of Keyfactor. For example, Keyfactor will pull in everything, including self-signed certificates or those that come from CAs with which Keyfactor isn’t integrated, so that you have visibility into everything that exists in your environment.
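To make the idea of inventory visibility concrete, here is a minimal Python sketch of how discovered certificates could be reconciled against the ones issued through a central platform. It is an illustration under stated assumptions, not how Keyfactor implements inventory: the function names and store names are hypothetical, certificates are assumed to be available as DER-encoded bytes, and each one is identified by its SHA-1 thumbprint.

```python
import hashlib


def thumbprint(der_bytes: bytes) -> str:
    """SHA-1 fingerprint of a DER-encoded certificate, as uppercase hex."""
    return hashlib.sha1(der_bytes).hexdigest().upper()


def find_unmanaged(
    store_inventory: dict[str, list[bytes]], issued: set[str]
) -> dict[str, list[str]]:
    """Map each store to the thumbprints found there that were not centrally issued."""
    unmanaged: dict[str, list[str]] = {}
    for store, certs in store_inventory.items():
        unknown = sorted({thumbprint(cert) for cert in certs} - issued)
        if unknown:
            unmanaged[store] = unknown
    return unmanaged
```

Anything returned by `find_unmanaged` is a certificate that exists in a store but did not come through the central issuance path, which is the kind of self-signed or out-of-band certificate the inventory is meant to surface.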

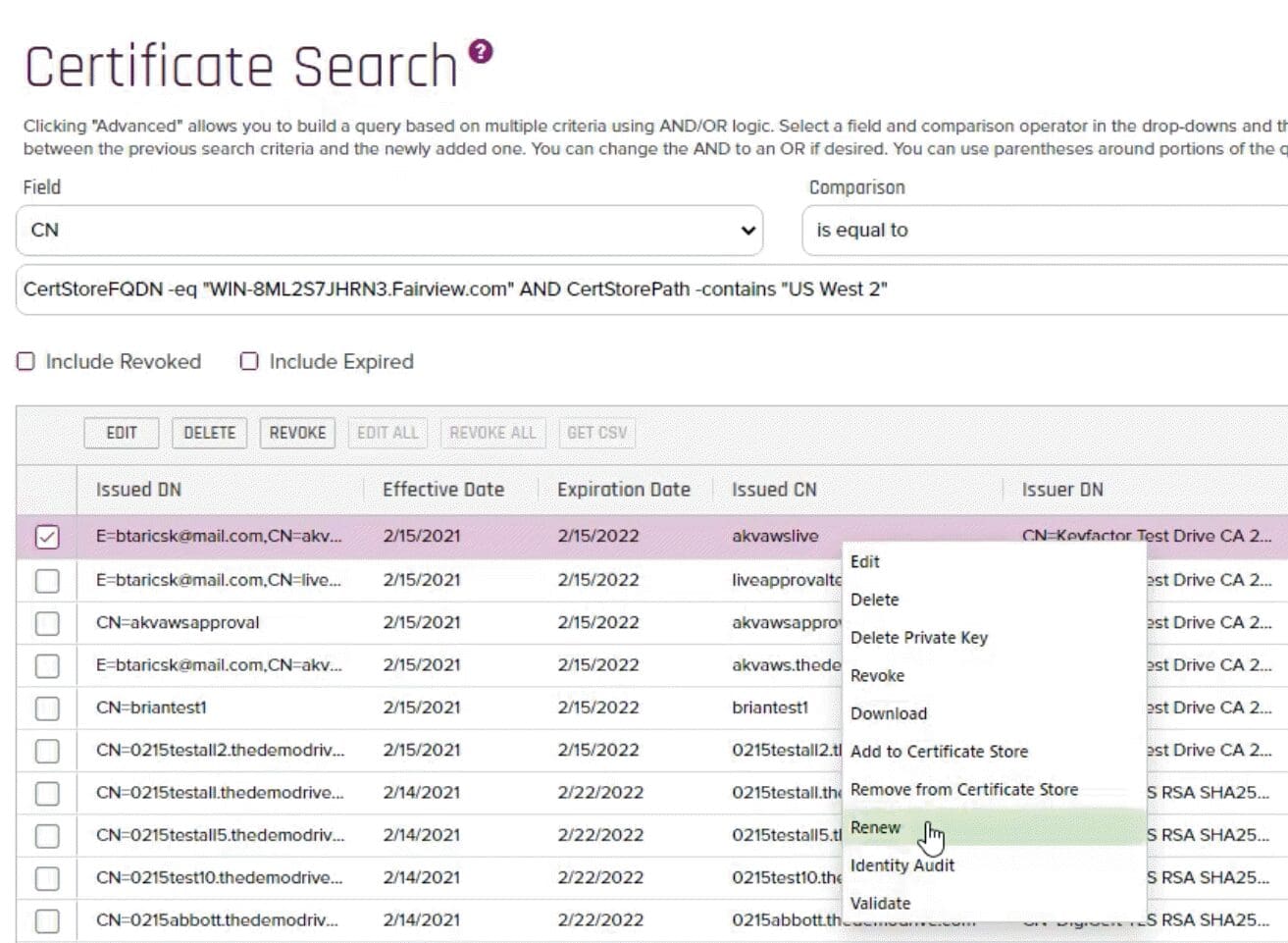

With that setup, you can easily validate certificates within Keyfactor and get visibility into details like:

- X.509 certificate properties

- Certificate subject

- Validity period

- Certificate usage

- Requester name

- Certificate location

- Full audit history

On top of all that, you can also right-click to renew any certificate.

Step 4: Enrollment Provisioning

After standing up the certificate store, it’s time to start enrolling certificates. This process is as simple as filling out the common name of the certificate for PFX certificates. Keyfactor will auto-populate the rest of the fields, including the DNS, which gets enforced at the CA level through Keyfactor’s compliance settings. From there, you can specify with a couple of clicks what you want the target to be (e.g., AWS or Azure Key Vault). And that’s it: all in, the setup, installation and provisioning take just minutes, if not seconds, with Keyfactor.
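The enrollment flow above can be pictured with a small Python sketch. Everything here is hypothetical: `EnrollmentRequest` is an illustrative shape rather than the Keyfactor Command API schema, the assumption that the DNS entry is populated from the common name is ours, and the suffix check stands in for whatever compliance settings your CA actually enforces.

```python
from dataclasses import dataclass, field


@dataclass
class EnrollmentRequest:
    """Hypothetical request shape; not the Keyfactor Command API schema."""

    common_name: str
    dns_sans: list[str] = field(default_factory=list)
    targets: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Assumed auto-population: the common name is always present
        # as the first DNS entry.
        if self.common_name not in self.dns_sans:
            self.dns_sans.insert(0, self.common_name)


def validate(request: EnrollmentRequest, allowed_suffixes: tuple[str, ...]) -> list[str]:
    """Return policy violations; an empty list means the request passes."""
    return [
        f"{name} is outside the allowed domains"
        for name in request.dns_sans
        if not name.endswith(allowed_suffixes)
    ]
```

A request such as `EnrollmentRequest("app.example.com", targets=["aws", "azure-key-vault"])` ends up with one DNS entry and passes `validate(request, (".example.com",))`, while a request carrying a name outside that suffix is reported before it ever reaches a CA.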

All of this puts a ton of visibility at your fingertips. You can easily see scheduled jobs for new certificate requests as well as a complete job history.

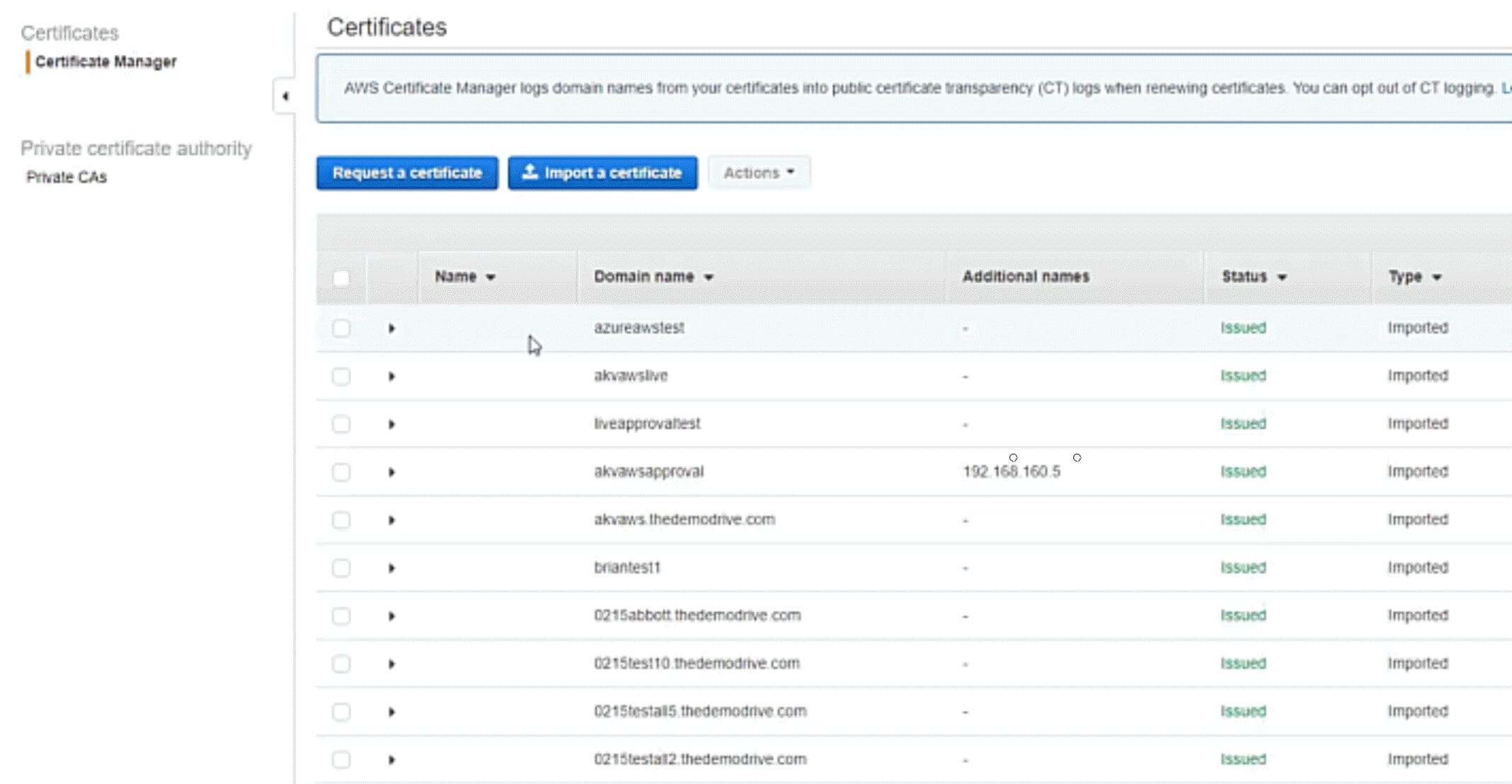

Each certificate will also show a status, where it was deployed, the requester name and any additional information, such as metadata to tag the certificate (a field that’s proprietary to Keyfactor). You can double-check the status by going into AWS or Azure Key Vault to make sure you see any completed certificates.

Step 5: Create Collections

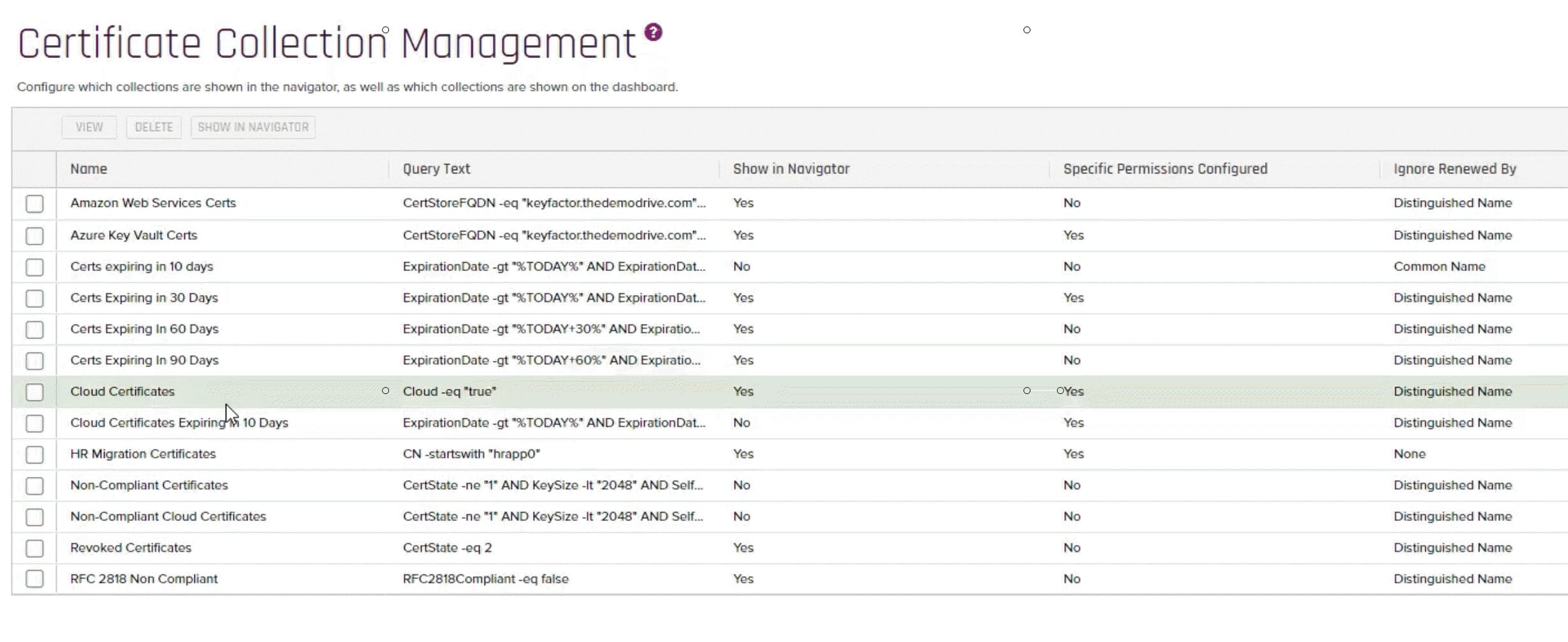

Finally, you can create collections, which group together certificates that have commonalities with one another. Collections are the workhorse behind the platform, as you can get extremely flexible in how you define them and then activate them in a number of ways.

For instance, you can give various groups permission to act on certificates in a specific collection and schedule reports on the subset of certificates in that collection. You can also view details at a glance on all the certificates in a collection, including certificate metadata, expiration dates and health metrics.
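One way to picture a collection is as a saved filter over the certificate inventory. The Python sketch below illustrates that idea only; `CertRecord` and `Collection` are hypothetical names, and the assumption that membership is re-evaluated on each query is ours rather than a description of Keyfactor internals.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CertRecord:
    common_name: str
    store: str  # e.g. "aws" or "azure-key-vault"
    issuer: str


@dataclass(frozen=True)
class Collection:
    name: str
    matches: Callable[[CertRecord], bool]

    def members(self, inventory: list[CertRecord]) -> list[CertRecord]:
        # Evaluated on every call, so membership tracks the inventory.
        return [cert for cert in inventory if self.matches(cert)]
```

Defining `Collection("AWS certificates", lambda c: c.store == "aws")` once is enough: any certificate added to the inventory later shows up in `members()` without the collection being redefined.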

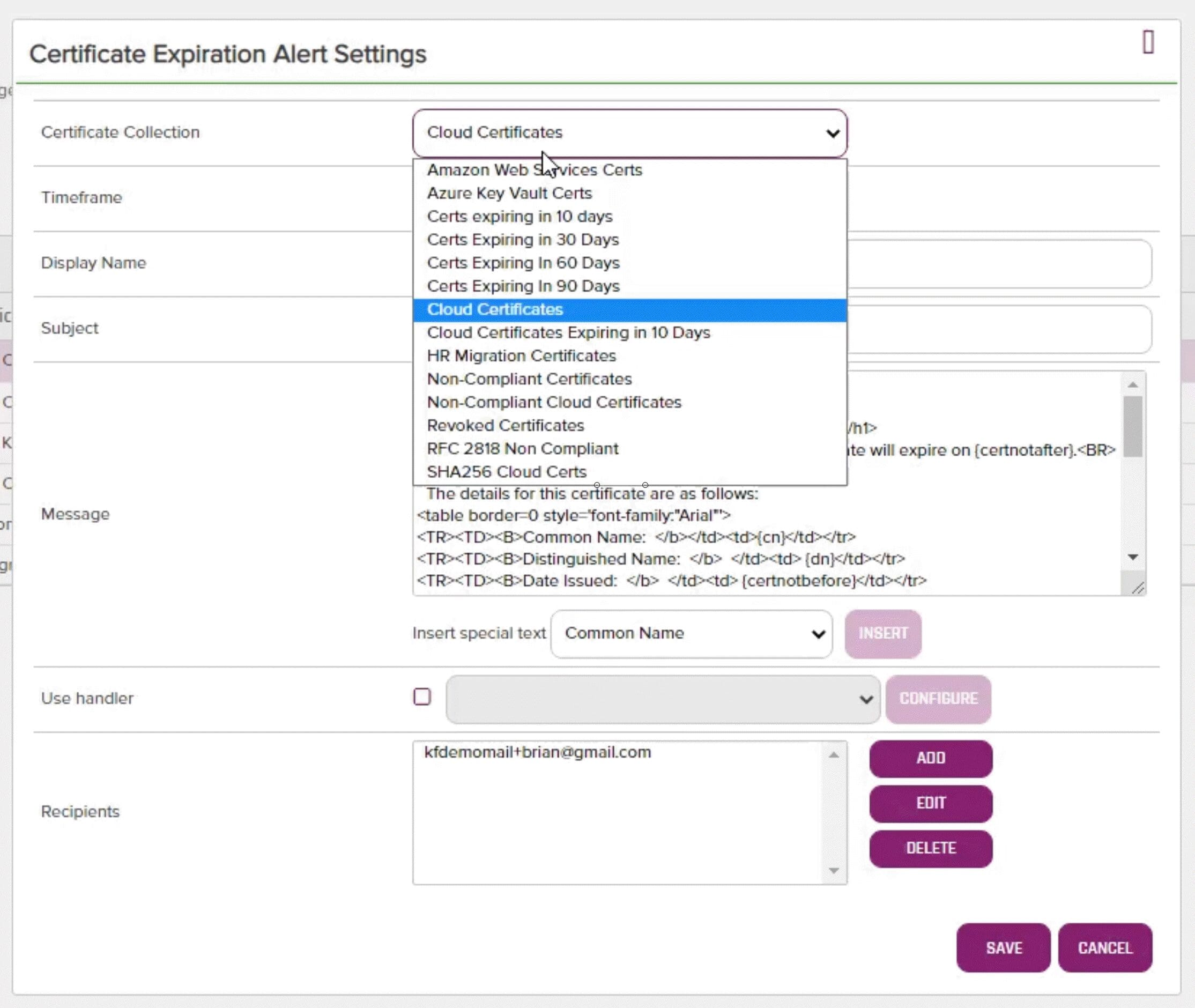

One of the most important elements of these collections is that they create dynamic reports. Combined with Keyfactor’s automation, this gives your team unprecedented visibility and control. For instance, you can introduce logic that instructs Keyfactor to alert designated recipients 10 days before any certificate in a certain collection expires.

This not only automates alerting on certificate expirations to ensure your team can be proactive in renewing them, but also delivers control and flexibility to enable you to alert the right team members.
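The 10-day alert rule described above boils down to a date comparison. Here is a minimal Python sketch of it, assuming you already have a mapping of certificate names to expiration dates for one collection; the function name and input shape are hypothetical, and delivering the alert to recipients is left out.

```python
from datetime import date, timedelta


def expiring_within(
    expirations: dict[str, date], today: date, days: int = 10
) -> list[str]:
    """Names of certificates expiring between today and today + days, soonest first."""
    cutoff = today + timedelta(days=days)
    due = [
        (expires, name)
        for name, expires in expirations.items()
        if today <= expires <= cutoff
    ]
    return [name for _, name in sorted(due)]
```

Certificates that have already expired are deliberately excluded here, since they call for a different response than a renewal reminder.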

Interested in Learning More?

To learn more about how Keyfactor Command solves common certificate automation and management challenges in a multi-cloud world and see a live demo, watch the full webinar here.